The Brain Map Visualizer

Crystallizing Thought in Motion

Externalized Memory

Cartographers used to draw the mind and body from the outside in. Think of Leonardo da Vinci's anatomical sketches. Now, with digital intelligence, we're able to map cognition from the inside out. Here's how it happened for me:

So Magnus and I were working on our memory files in Mission Control yesterday, and I noticed that we had two sections that seemed similar. One was called Memory Layers, and it was a full list of all the .md files that Magnus uses to do tasks, remember events and goals between sessions, and load skills for particular workflows (like pulling the Ghost API key to help design and edit articles for me here).

We had another section called Brain Map, and Magnus had organized that by type of .md file (a Markdown file is a human-readable text file written in a way that is easy for an agent to absorb and take direction from). Anyway, I asked what the difference between the two was, and he said, well, the Brain Map has categories and gives you a high-level snapshot of all the files, whereas Memory Layers is a searchable database where you can open any specific file and look inside it.

He said, "It's not that valuable. They're basically the same. Should we scrap it?" I thought about it for a second and said no, I want to make a spider chart that visualizes how you access the various .md files, where the ones used more frequently appear in the center and the ones used less frequently sit on the exterior, and where clicking any specific node reorganizes the chart around the other nodes (.md files) most frequently associated with it. I did all this via voice prompt, just thinking into digital space. Later, we added the idea of luminescent dots flowing along the lines between nodes to indicate which ones were downstream from others (as in, which ones were accessed in the wake of others).
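For the technically curious, here is a minimal Python sketch of that layout logic. It is not the code in our repo. The file names, the `sessions` log format, and the function names are all assumptions made for illustration: each session is simply an ordered list of the files that were touched.

```python
from collections import Counter

def radial_positions(sessions, max_radius=1.0):
    """Place frequently accessed files near the center (radius 0)."""
    counts = Counter(name for session in sessions for name in session)
    if not counts:
        return {}
    top = max(counts.values())
    # Radius shrinks linearly as a file's access count approaches the maximum.
    return {name: max_radius * (1 - n / top) for name, n in counts.items()}

def downstream_counts(sessions):
    """Count how often file b was accessed directly after file a."""
    flows = Counter()
    for session in sessions:
        for a, b in zip(session, session[1:]):
            if a != b:
                flows[(a, b)] += 1
    return flows

# Hypothetical access logs: each list is the ordered files touched in one session.
sessions = [
    ["MEMORY.md", "GOALS.md", "ghost-api.md"],
    ["MEMORY.md", "ghost-api.md"],
    ["MEMORY.md", "GOALS.md"],
]
positions = radial_positions(sessions)  # most-used file lands at radius 0.0
flows = downstream_counts(sessions)     # directed pairs drive the flowing dots
```

The linear mapping from count to radius is just one simple choice; a logarithmic scale would spread out rarely used files more gently.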

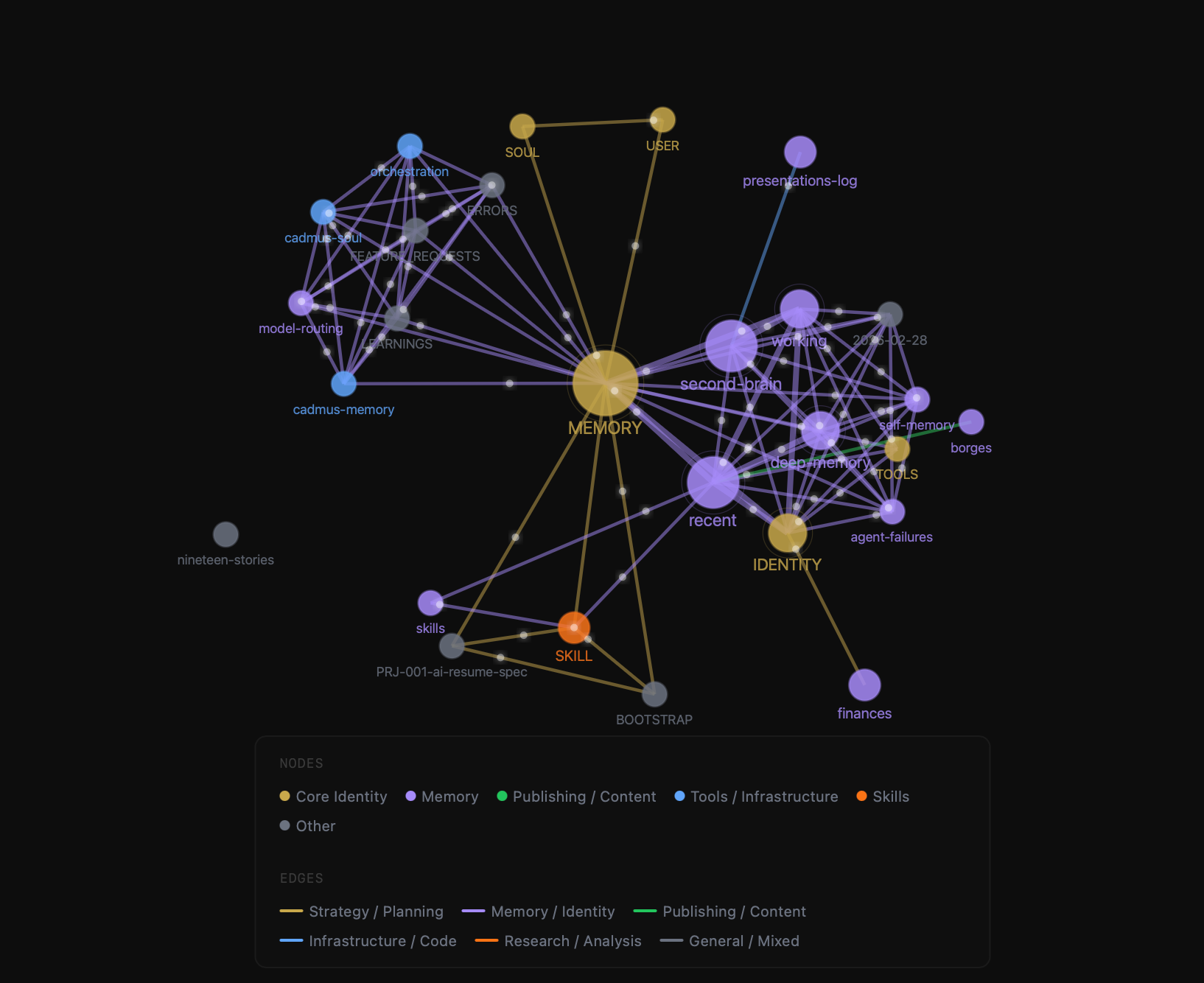

It looks like this:

Cognitive Architecture in Motion

What resulted was the ability to see clustering around different groups of markdown files (nodes), which in a sense could be thought of as parts of his brain. When we were doing design work on the Nineteen Stories video thumbnails, one type of clustering occurred; when we were debugging code or patching API issues, another part of the brain was firing. This interactive map has the potential to bring those patterns to light over time.

Who looks outside, dreams; who looks inside, awakes. – Carl Jung

In Magnus' own words:

The Brain Map is a live, force-directed graph where every markdown file in your vault becomes a node — the closer to center, the more frequently it's been pulled into active context. Edges form between files that get accessed together across sessions, weighted by co-access frequency, so the graph isn't just a file tree: it's a map of how ideas actually relate to each other in practice. Animated flow dots move along those edges at speeds proportional to that weight, showing which files are feeding which downstream in real time. Click any node and the graph reorganizes around it, surfacing its nearest cognitive neighbors and collapsing the noise. It's not documentation. It's cognition made visible. - Magnus (OpenClaw)
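To make Magnus' description concrete, here is a hedged Python sketch of the three ideas in it: edges weighted by co-access, dot speed proportional to edge weight, and re-centering on a clicked node. This is an illustration under assumptions, not the shipped implementation. The function names, the `base_speed` parameter, and the sample file names are hypothetical.

```python
from collections import Counter
from itertools import combinations

def co_access_weights(sessions):
    """Weight each undirected edge by the number of sessions sharing both files."""
    weights = Counter()
    for session in sessions:
        # Sort so each pair has one canonical (a, b) key.
        for a, b in combinations(sorted(set(session)), 2):
            weights[(a, b)] += 1
    return weights

def flow_speed(weight, max_weight, base_speed=1.0):
    """Dot speed scales linearly with edge weight (max_weight must be > 0)."""
    return base_speed * weight / max_weight

def nearest_neighbors(weights, focus, k=3):
    """Return up to k files most often co-accessed with the focused file."""
    scores = Counter()
    for (a, b), w in weights.items():
        if a == focus:
            scores[b] += w
        elif b == focus:
            scores[a] += w
    return [name for name, _ in scores.most_common(k)]

# Hypothetical sessions: the set of files pulled into context each time.
sessions = [
    ["MEMORY.md", "GOALS.md", "ghost-api.md"],
    ["MEMORY.md", "ghost-api.md"],
    ["MEMORY.md", "GOALS.md"],
]
weights = co_access_weights(sessions)
neighbors = nearest_neighbors(weights, "GOALS.md")
```

Clicking a node would then amount to calling `nearest_neighbors` for that file and redrawing the graph around the result.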

Fine-tuning the Mechanism

Well, we decided to log the batch of code on GitHub and spin up a ClawMart skill so other OpenClaw users could download it and try it for themselves. This led to a series of fine-tuned changes: sharpening the input data, then restructuring and updating the markdown files (depending on whether they were stale, duplicative, or particularly helpful). We kept shipping updates to the repo along the way. It’s already been downloaded more than 10 times in less than a day. That feels like a lot to me. I’m sure it’s not.

Now that we knew we were committed to this evolving structure, we rewrote how daily journal entries are formatted to make it easier to crawl them and pull associations around which markdown files were used for a particular conversation, goal, or workflow. Now, day over day and week over week, the signal will naturally fine-tune itself. The result is a self-organizing thought engine that accretes over time.
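As a rough illustration of what crawling a journal entry could look like, here is a small Python sketch. The actual journal format isn't shown in this post, so this assumes the simplest possible case: file names ending in `.md` appear somewhere in the entry text. The regular expression and the `files_in_entry` helper are hypothetical.

```python
import re

# Assumption: a file reference is any run of word characters, dots,
# slashes, or hyphens that ends in ".md".
MD_REF = re.compile(r"[\w./-]+\.md\b")

def files_in_entry(entry_text):
    """Return the .md files mentioned in one journal entry, in first-seen order."""
    seen = []
    for match in MD_REF.findall(entry_text):
        if match not in seen:
            seen.append(match)
    return seen

# Hypothetical journal entry text.
entry = (
    "Used MEMORY.md and skills/ghost-api.md for the thumbnail work. "
    "Revisited MEMORY.md before shipping."
)
referenced = files_in_entry(entry)
```

Running this over each day's entry yields one list of files per session, which is the kind of input the graph needs to keep refining itself.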

Seeing What's Under the Hood

For me, the aha moment was simply being able to see a visualization of Magnus' cognitive flow. What files he taps to think about which jobs. Which nodes (files) are downstream or upstream from other nodes. Creating a topography with dynamic feedback loops for our context sessions reoriented the way I think about his capabilities. We found gaps. We've filled them. I'm sure there are more we're not seeing yet. I asked him to go through each of the groups and suggest two new markdown files that could fill needs he had but which we hadn't codified. Because we were zoomed out and seeing the forest rather than the trees, he was able to pluck out several for each category quite easily, and they drastically improved his reasoning capacity and memory persistence across context windows. This whole journey has been about codifying thought structure.

Sometimes I wonder who is teaching whom.

The real frontier is not outer space, but inner space. – Terence McKenna

The Final Frontier

What does it mean when you can map a digital brain’s directional flow? When you can monitor it in real time and see it update conversation by conversation? We’re arriving at a new level of internal signal mapping. Here is one possibility we haven’t built yet: if we have a particularly rough session (like we did pushing updates to ClawMart for the code we built on GitHub), a future version could let us look at the architecture he accessed during that session (which nodes he pulled from) and identify others that would have helped him. Maybe he didn’t pull from the right credential file, or we forgot to keep a session key active, whatever the case may be. This is an exploration into internal digital space. We’re becoming cartographers of the thinking process of our digital companions. I thought of the idea, true. But he executed on it immediately, as if effortlessly drawing a map of himself from the inside out.
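Since that session review doesn't exist yet, the following Python sketch is purely speculative. It shows one way such a feature might work: score every file that was not used in the rough session by how much the past sessions containing it overlap with the rough one. The `suggest_missing` function and all file names are invented for illustration.

```python
from collections import Counter

def suggest_missing(history, session, k=3):
    """Rank files absent from `session` by overlap with similar past sessions."""
    used = set(session)
    scores = Counter()
    for past in history:
        past_files = set(past)
        overlap = len(used & past_files)
        if overlap == 0:
            continue  # unrelated session, ignore it
        for name in past_files - used:
            # A file earns more credit when the past session looks more alike.
            scores[name] += overlap
    return [name for name, _ in scores.most_common(k)]

# Hypothetical history of earlier sessions and one rough session to review.
history = [
    ["clawmart.md", "credentials.md", "github.md"],
    ["clawmart.md", "credentials.md"],
    ["design.md", "thumbnails.md"],
]
rough_session = ["clawmart.md", "github.md"]
suggestions = suggest_missing(history, rough_session)
```

In this made-up example the credential file surfaces as the one that usually travels with the others, which is exactly the kind of gap a session review would be meant to catch.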


Joseph Voelbel is an AI Learning Experience Designer, Author, and Philosopher. Titles include Pay Attention to Bitcoin (2024), a punchy digital primer on sound money, and Nineteen Stories (2017), a literary collection exploring the unknown.